Millions of young people worldwide struggle with alcohol and other substance use, yet seeking help can feel daunting—and timely, evidence-based information and care can be difficult to access. Persistent barriers, including stigma, confidentiality concerns, online misinformation, cost, long wait times, and fragmented services, can delay access to early support.

Substance use rarely occurs in isolation; it is often intertwined with co-occurring mental health symptoms, emotional distress, physical health concerns, and broader inequities in access to care. Despite these layered needs, young people often encounter fragmented, adult-centred systems that are difficult to navigate. Fear of judgment, loss of privacy, or being misunderstood can push even highly motivated individuals to cope alone. In that context, self-medication can offer short-term relief, but it may also contribute to escalating use and worsening mental health symptoms over time, making recovery harder to sustain.

There is no shortage of AI hype (and hysteria) right now, but we wanted to harness the “good” parts of AI technology while eliminating or limiting the “bad” parts. As a result, we sought to develop an AI conversational agent that draws on a curated, expert-reviewed evidence base (rather than content generated from the open web), while feeling approachable, supportive, and judgment-free. We also wanted to go well beyond “just another generic chatbot” and create something that is innovative from both clinical and public health perspectives, while making the needs, values, and preferences of end users the top priority (non-negotiable in our view).

What Is JARA and Why Did We Build It?

The AI conversational agent we’re building is called “JARA” (JAR-UH), which stands for Judgment-free Assessment & Resource Assistant. As of the date of writing, it is a prototype designed to support youth and young adults (18+) who use, or are thinking about using, alcohol or other substances. Through plain-language dialogue, JARA helps users set any health goal, ranging from information to harm reduction, prevention, moderation, recovery, or abstinence. It uses AI to suggest tailored next steps, including guidance, information, reminders, and relevant services. JARA can answer questions ranging from “What is the best way to cut back on drinking beer on the weekends?” to “I am struggling with opioid use, and feel sick if I stop using, where can I go to get help right away without feeling judged?” to “I am trying cannabis but am not sure what the different dosages of THC mean.”

It is also being developed with family members in mind: people who may want scientifically backed information to support a loved one but are too afraid to reach out. Early identification and information are critical to saving lives and preventing substance use disorders. As someone who has worked in this space for a long time, in both recovery and harm reduction, I believe it is far preferable to intervene early than to wait until “rock bottom” hits. Rock bottom often translates to lifelong injury, time behind bars, loss of employment, or even death.

JARA is named in memory of my friend Jared, who passed away in 2019 from an unintentional drug poisoning. Jared was very open about his substance use and generously supported my doctoral research as a peer advisor. Despite actively seeking help, he couldn’t access timely, evidence-based care and was left to self-medicate alone.

In the addiction space, there’s often debate about which interventions or models of care are most effective. But in reality, several approaches might have helped Jared—if only they had been accessible at the right time, in the right place. That’s what inspired the idea behind JARA: how can we make trusted, evidence-based information and care more timely and accessible, no matter where someone is in their journey?

How JARA Works

The JARA prototype currently operates through a chat/text interface, and users receive nonjudgmental, personalized guidance aligned with their self-identified goals, including seeking information, harm reduction support, moderation, recovery coaching, relapse risk awareness and prevention, and help with navigating complex mental health systems. Future iterations will also include voice-to-voice capabilities. JARA uses a person-centred conversational flow, informed in part by the Transtheoretical Model of Behaviour Change, to help tailor support to a user’s readiness for change. It begins by setting clear expectations about what it can and cannot do, including explicit boundaries (e.g., it is not a substitute for professional care). Then, through structured but non-directive prompts, it helps users reflect on their current goals and options, and provides evidence-informed guidance accordingly. This guidance is framed through a shared decision-making approach that considers contextual risks and the user’s values, as well as recommendations from the curated knowledge base.
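
To make the flow above concrete, here is a minimal sketch of how a readiness-based conversation step could be structured. The five stage names come from the Transtheoretical Model itself; everything else (`Stage`, `BOUNDARY_NOTICE`, `SUPPORT_STYLE`, `next_prompt`, and the wording of each prompt) is a hypothetical illustration, not JARA's actual implementation.

```python
from enum import Enum


class Stage(Enum):
    """The five stages of the Transtheoretical Model of Behaviour Change."""
    PRECONTEMPLATION = "precontemplation"
    CONTEMPLATION = "contemplation"
    PREPARATION = "preparation"
    ACTION = "action"
    MAINTENANCE = "maintenance"


# Hypothetical wording: shown first so expectations and boundaries are explicit.
BOUNDARY_NOTICE = (
    "I can share evidence-based information and help you reflect on your goals. "
    "I am not a substitute for professional care."
)

# Hypothetical, non-directive prompts matched to readiness for change.
SUPPORT_STYLE = {
    Stage.PRECONTEMPLATION: "Would it help to look at some neutral information together?",
    Stage.CONTEMPLATION: "What feels good about changing, and what feels hard?",
    Stage.PREPARATION: "What is one small step that would feel realistic this week?",
    Stage.ACTION: "How is the plan going so far, and what has helped?",
    Stage.MAINTENANCE: "What situations feel risky, and what support do you want for them?",
}


def next_prompt(stage: Stage, expectations_set: bool) -> str:
    """Return the boundary notice first, then a stage-matched reflective prompt."""
    if not expectations_set:
        return BOUNDARY_NOTICE
    return SUPPORT_STYLE[stage]
```

In this sketch the stage is supplied by the caller; how readiness is actually inferred from a conversation is outside its scope.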

JARA emphasizes emotional safety, autonomy, and transparency. It avoids assumptions, false certainty, and automatic agreement; when appropriate, it uses reflective check-ins and gentle reality-testing to broaden perspective and encourage reflection. To reduce the risk of over-validation, JARA employs a multi-agent workflow in which one agent generates a draft response and another independently reviews it for tone, balance, and safety, flagging responses that are overly affirming, insufficiently reflective, or inconsistent with the curated evidence base.
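
The draft-then-review workflow described above can be sketched in a few lines. This is an illustration under stated assumptions, not JARA's code: the names `Review`, `respond`, `stub_drafter`, `stub_reviewer`, and `FALLBACK` are hypothetical, and the two "agents" are simple stand-in functions where a real system would call language models.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Review:
    """Outcome of the independent review step (hypothetical structure)."""
    approved: bool
    flags: List[str] = field(default_factory=list)


Drafter = Callable[[str, List[str]], str]   # (user message, reviewer flags) -> draft
Reviewer = Callable[[str, str], Review]     # (user message, draft) -> review

# Hypothetical safe reply used when no draft passes review.
FALLBACK = "I want to be careful here. Could you tell me more about what you are hoping for?"


def respond(message: str, drafter: Drafter, reviewer: Reviewer, max_attempts: int = 2) -> str:
    """Generate a draft, have a second agent review it, and revise if flagged."""
    flags: List[str] = []
    for _ in range(max_attempts):
        draft = drafter(message, flags)
        review = reviewer(message, draft)
        if review.approved:
            return draft
        flags = review.flags  # feed the reviewer's concerns into the next draft
    return FALLBACK


def stub_drafter(message: str, flags: List[str]) -> str:
    """Stand-in for a drafting agent: becomes more reflective when flagged."""
    if "over_validation" in flags:
        return "It sounds like weekends are the hard part. What would cutting back look like for you?"
    return "That is a great plan, you are doing everything right!"


def stub_reviewer(message: str, draft: str) -> Review:
    """Stand-in for a reviewing agent: flags overly affirming drafts."""
    if "everything right" in draft:
        return Review(approved=False, flags=["over_validation"])
    return Review(approved=True)
```

Calling `respond("I want to cut back on weekends", stub_drafter, stub_reviewer)` returns the reflective second draft, because the first is flagged as over-validating. Keeping the reviewer separate from the drafter means a response is never delivered on the drafter's judgment alone.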

Where appropriate (and only with the user’s consent), JARA can also incorporate brief, validated screening questionnaires to support structured self-reflection and summarization, including substance use tools such as the AUDIT, DAST-10, and DUDIT. Results can be displayed on a user-facing dashboard and, only with explicit consent, shared via a clinician-facing dashboard. The team is also exploring validated measures for holistic mental health and recovery outcomes, including depression, anxiety, quality of life, functional status, sleep, and recovery capital. These tools are intended to complement conversation (not replace clinical assessment) and can help users track change over time and identify when seeking professional care may be needed.
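
As an illustration of how a screening result might be scored and gated by consent, the sketch below uses the AUDIT. The scoring rules (10 items, each scored 0 to 4, with items 9 and 10 scored 0, 2, or 4) and the four risk zones follow the WHO AUDIT manual; the function names and the dashboard structure are hypothetical, and the zones are a prompt for reflection, not a diagnosis.

```python
from typing import Dict, Sequence


def audit_total(item_scores: Sequence[int]) -> int:
    """Sum the 10 AUDIT item scores after validating each one."""
    if len(item_scores) != 10:
        raise ValueError("AUDIT has exactly 10 items")
    for number, score in enumerate(item_scores, start=1):
        # Items 1-8 are scored 0-4; items 9 and 10 are scored 0, 2, or 4.
        allowed = (0, 2, 4) if number >= 9 else (0, 1, 2, 3, 4)
        if score not in allowed:
            raise ValueError(f"item {number}: invalid score {score}")
    return sum(item_scores)


def audit_zone(total: int) -> str:
    """Map a total score (0-40) to the WHO manual's risk zones I-IV."""
    if not 0 <= total <= 40:
        raise ValueError("AUDIT total must be between 0 and 40")
    if total <= 7:
        return "I"
    if total <= 15:
        return "II"
    if total <= 19:
        return "III"
    return "IV"


def build_views(item_scores: Sequence[int], share_with_clinician: bool) -> Dict[str, dict]:
    """Build dashboard views; the clinician view exists only with explicit consent."""
    total = audit_total(item_scores)
    summary = {"tool": "AUDIT", "total": total, "zone": audit_zone(total)}
    views = {"user": summary}
    if share_with_clinician:  # hypothetical consent flag supplied by the user
        views["clinician"] = dict(summary)
    return views
```

Without consent, `build_views` returns only the user-facing view, so nothing reaches a clinician-facing dashboard by default.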

JARA provides evidence-informed resources and psychoeducation delivered in supportive, stigma-free language. It also includes built-in safeguards to detect signs of acute risk, such as potential self-harm or interpersonal violence. In such cases, JARA responds with clear, respectful referrals to crisis services or appropriate human supports. All interactions prioritize user consent, and no identifiable data is stored or shared; any data used for evaluation is collected with explicit consent and with appropriate safeguards.

JARA’s content is grounded in a curated knowledge base of peer-reviewed literature, reviewed by experts in addiction medicine, harm reduction, mental health, and lived experience. To reduce misinformation, the system is designed to stay within validated content and seek clarification when unsure, supported by independent review steps before a response is delivered. By offering an approachable, confidential space, JARA aims to bridge the gap from early awareness and intervention to formal care by making the first step toward change feel possible. JARA is being developed through a staged research and implementation process grounded in the WHO Digital Health Evaluation Framework, with a strong focus on co-design. Youth, caregivers, clinicians, and health system leaders have been engaged from the beginning to ensure JARA is safe, relevant, and practical in real-world contexts.

How JARA Differs from Other Emerging Gen-AI Tools

Many Gen-AI mental health tools emphasize open-ended conversation. JARA is designed to complement this landscape by focusing specifically on substance and alcohol use among youth and young adults (18 to 29 years old), and by supporting early identification and linkage to evidence-based information, support, and care. In addition to conversation, JARA integrates brief validated screening, structured progress tracking, and user- and clinician-facing dashboards (with explicit consent) to support follow-up and care pathway integration. JARA also uses a multi-agent architecture, enabling independent review of responses for accuracy, tone, and safety to help reduce risks such as over-validation. Over the next month or so, we will finalize a scoping review and early market research to map this rapidly evolving space, and JARA will continue to evolve in response to emerging evidence, user feedback, and implementation needs.

How Will We Know It Works?

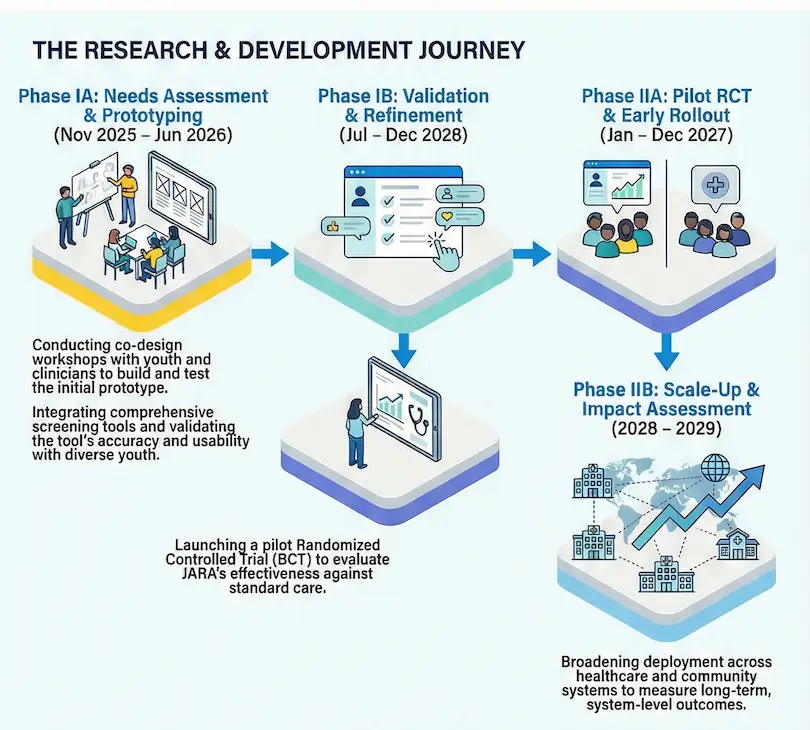

In January 2025, we initiated a multiphase research and evaluation process. In Phase 0 (2025), we focused on foundational research and building a multidisciplinary team. This included evidence mapping, early market positioning, and extensive stakeholder consultation. A scoping review of conversational agents for substance use is nearing completion, and early market research has clarified adoption pathways and partnership models.

Pending ethics approval from the University of Alberta, the project will proceed to Phase I, divided into two sub-phases. Phase IA will assess usability, feasibility, and implementation readiness through online surveys and one-on-one semi-structured interviews. Participants will include youth and young adults, caregivers, clinicians, and health system administrators to capture a wide range of perspectives. Findings from Phase IA will inform system refinements, which will then be tested in Phase IB. In Phase IB, participants will interact directly with JARA and provide quantitative and qualitative feedback on usability, tone, perceived helpfulness, safety, and functionality, including whether the system appropriately supports reflection and escalation to human professional care, rather than encouraging over-reliance or excessive validation.

Insights from Phase I will guide the development of a pilot-ready version of JARA for Phase II, which will focus on real-world testing in care settings. This staged approach helps ensure JARA remains grounded in lived experience, evidence, and health system needs, laying the foundation for a scalable, person-centred digital support tool that can help close gaps in early intervention and substance use care.

What We’ve Learned (So Far)

Funding and operational capacity have been the most persistent challenges. Many available grants prioritize scaling and later-stage validation rather than early ideation or prototype development. As a result, JARA remains a side-of-desk project, and we genuinely couldn’t do it without the volunteer team’s contributions. Despite these challenges, we’re encouraged by JARA’s progress—it’s already functioning well as an early prototype, though we have not yet moved it to a cloud-based platform where its capabilities can be further refined and scaled. While this has limited our pace, it’s also been a blessing in disguise. It’s allowed us to move deliberately, listening carefully to those we’re designing for, and building with discipline and integrity.

This approach has yielded important lessons. First, trust must be co-designed intentionally; we can’t assume what youth and young adults need or want without asking them directly. We’re involving lived-experience voices, family members, harm reduction workers, psychologists, addiction experts, AI experts, and system leaders from the start, and we’ll continue to do so at every stage. Second, safety must be built into JARA’s functionality and rigorously tested, not treated as a simple “disclaimer.” Third, we’ve come to see resource navigation not as a static feature but as an ongoing operational capability: services change, systems shift, and information must be updated to remain useful and safe. Finally, we’re building evidence methodically, step by step, starting with usability, safety, and real-world need, and then progressing to larger clinical trials across various settings.

Our Team

JARA is supported by a small but mighty team of volunteers and researchers. This includes seven student interns with diverse backgrounds, including neuroscience, pre-med, AI engineering, public health, and business. Dr. Andy Greenshaw is the principal investigator, and Dr. Adam Abba-Aji and Dr. Osmar Zaiane are co-investigators at the University of Alberta, with guidance from a multidisciplinary advisory board. We’re actively seeking support in all areas, including investors and new advisory and implementation partners who share our vision.

Looking Ahead

In the coming months, we will finalize our scoping review, proceed with Phase IA and Phase IB data collection, and prepare manuscripts. Findings from Phase I will be used to refine the prototype, improve usability, and prepare for a randomized controlled trial (Phase II), along with collecting real-world evidence. At the same time, we’re exploring funding and partnership models to support staged implementation, starting in Canada and expanding to the United States as the model matures. This staggered approach reflects our commitment to responsible scaling. We aim to ensure that JARA aligns with local systems, respects privacy and governance requirements, and delivers real value to youth and young adults.

Connect With Us

We’re eager to connect with individuals and organizations interested in three key areas: (1) implementation partnerships; (2) evaluation and pilot testing collaboration; and (3) clinical and operational advisory input to guide responsible expansion. If you’re curious, concerned, or inspired, we’re more than happy to connect anytime.

Disclosures

JARA is a prototype under development. It is not intended to diagnose, treat, or replace professional clinical care. This case study was prepared by the author and only reflects the author’s views. The views expressed do not represent those of any employer or affiliated institution.

Appendix